During my latest upgrade from vSphere 6.7 to vSphere 7 for one of my customers, I ran into some interesting errors.

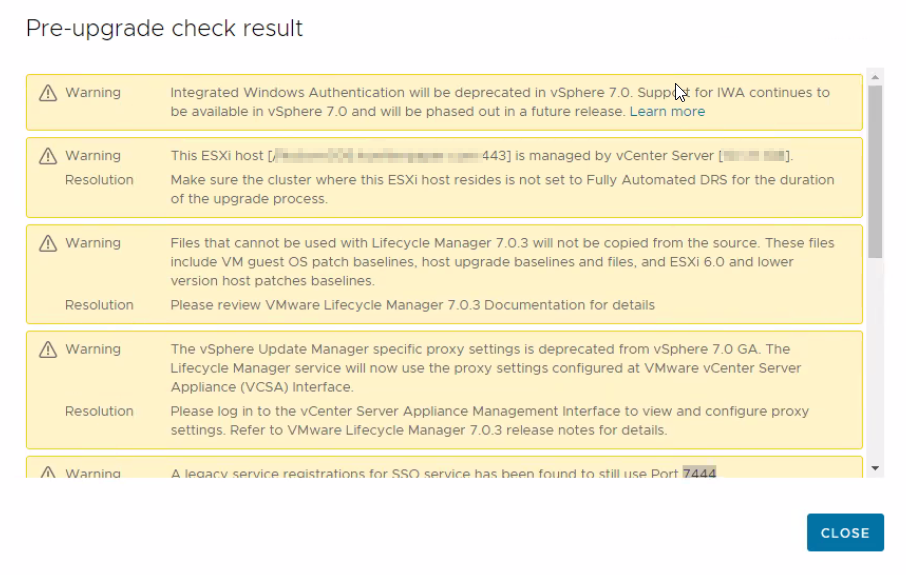

During an Upgrade Pre-Check we received the first errors:

OK, we deleted the host profiles with lower versions. But the problem with the PNID (Primary Network Identifier) was a lot more fun.

We received the error “The source appliance FQDN must be the same as the source appliance primary network identifier.”

Our VCSA had originally been a vCenter 5.5 on Windows, and at the first deployment its FQDN was entered with the hostname in uppercase letters (HOSTNAME.domain.com). The upgrader complains if the FQDN and the PNID don’t match exactly, and in my case it reported that HOSTNAME.domain.com didn’t match hostname.domain.com. According to VMware, the pre-check fails during the upgrade to vCenter Server 7.0 even if the FQDN and the PNID match in everything except the capitalization of some letters.

For example:

VCSA.domain.LOCAL

vs.

vcsa.domain.local

This is caused by case sensitivity in the appliance management service. We checked our PNID with the following command:

/usr/lib/vmware-vmafd/bin/vmafd-cli get-pnid --server-name localhost
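To make the case-sensitivity problem easier to spot, the comparison can be reproduced by hand. The following is a minimal Python 3 sketch, not VMware's actual pre-check: it reads the PNID with the vmafd-cli command shown above and classifies how it relates to an FQDN you supply. The helper names `read_pnid` and `classify_pnid` are made up for this illustration.

```python
import subprocess

# Path taken from the command above; it only exists on a vCenter Server Appliance.
VMAFD_CLI = "/usr/lib/vmware-vmafd/bin/vmafd-cli"


def read_pnid():
    """Return the PNID reported by vmafd-cli (must be run on the appliance)."""
    out = subprocess.check_output(
        [VMAFD_CLI, "get-pnid", "--server-name", "localhost"]
    )
    return out.decode("utf-8").strip()


def classify_pnid(pnid, fqdn):
    """Hypothetical helper: compare a PNID with an FQDN, case-sensitively first."""
    if pnid == fqdn:
        return "match"
    if pnid.lower() == fqdn.lower():
        # Same name, different capitalization: the situation described in this post.
        return "case-mismatch"
    return "different"
```

On the appliance, `classify_pnid(read_pnid(), "vcsa.domain.local")` would return `"case-mismatch"` for a PNID of VCSA.domain.LOCAL, which is exactly the case where a quick visual check tends to miss the difference.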

We also checked our DNS, and everything was in lowercase. We tried the following workaround:

https://kb.vmware.com/s/article/84355

However, it didn’t help. As we were on vSphere 6.7 U3, which supports changing the PNID, I tried to change it (how I did it is described here):

How to change the FQDN and IP address (PNID) of Center Server Appliance – VIRTUALINCA

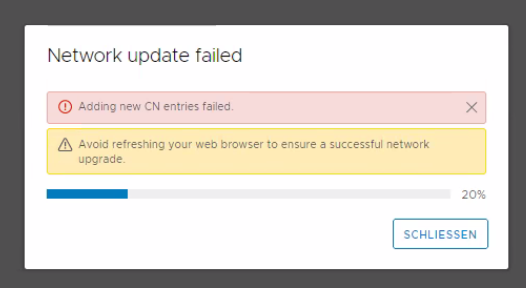

But guess what? We were still not able to change the PNID because during the process we received the next error message:

Adding CN entries failed.

The problem was that changing the PNID to a name that differs from the current one only in lowercase/uppercase, while the FQDN stays the same, ends with an error. So our workaround was to first change the PNID to a temporarily created FQDN; after that change succeeded, we were able to change it again to the correct FQDN and PNID without any problem. We also renewed the vCenter SSL certificate.
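The two-step rename can be written down as a small decision rule. This is only a sketch of the reasoning in Python 3, not a tool that changes anything on the appliance; the function name `plan_pnid_changes` and the temporary name used in the example are hypothetical, and the temporary FQDN is assumed to be resolvable in DNS before you use it.

```python
def plan_pnid_changes(current, target, temporary):
    """Return the PNID values to apply, in order, to get from current to target."""
    if current == target:
        # Nothing to do: the PNID already matches exactly.
        return []
    if current.lower() == target.lower():
        # Case-only change: a direct rename failed for us ("Adding CN entries
        # failed."), so go through a clearly different temporary name first.
        if temporary.lower() == target.lower():
            raise ValueError("temporary FQDN must differ from the target by more than case")
        return [temporary, target]
    # The names really differ, so a single change is enough.
    return [target]
```

For our situation, `plan_pnid_changes("HOSTNAME.domain.com", "hostname.domain.com", "temp-vcsa.domain.com")` yields the temporary name followed by the final lowercase name, which mirrors the order in which we performed the two changes.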

After rerunning the Pre-Check there were other problems, of course (“A legacy service registration for SSO service has been found to still use Port 7444” and “A stale service registration has been found to be using Solution User configuration from vCenter 5.5”).

We found our next workaround on the VMware site in the following KB:

https://kb.vmware.com/s/article/79741

To resolve those issues, you must download the ZIP file attached to the following article:

https://kb.vmware.com/s/article/80469

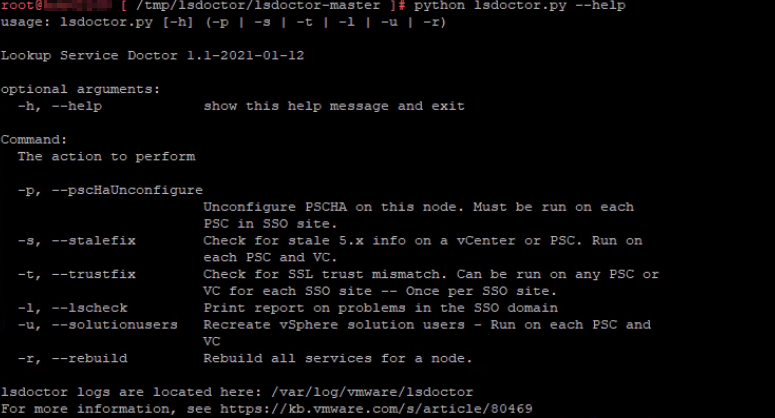

That article also describes how to use the Lookup Service Doctor tool. Then, use the file-moving utility of your choice (WinSCP in our case) to copy the ZIP file to the node on which you wish to run it. Once the file is copied to the VCSA, change your directory to the location of the file and run the following command to unzip it:

unzip lsdoctor.zip

Now be sure you are currently in the “lsdoctor-master” directory so you can run the tool. To run lsdoctor, use the following command:

python lsdoctor.py --help

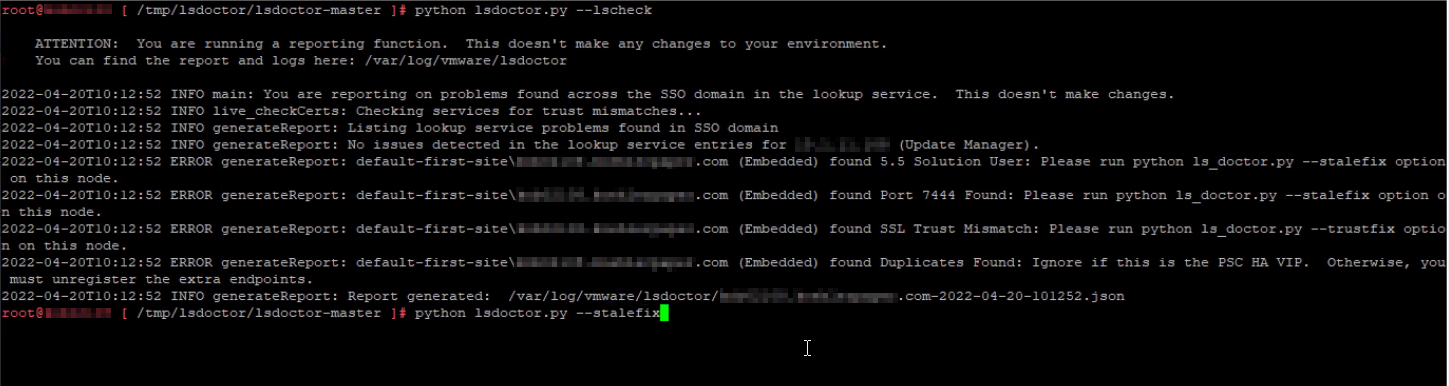

Now check for common issues in the lookup service with the lscheck option (python lsdoctor.py -l). No worries, it does not make any changes to the environment. It shows issues found on any node in the SSO domain; see the output for the findings and the path to the JSON report.

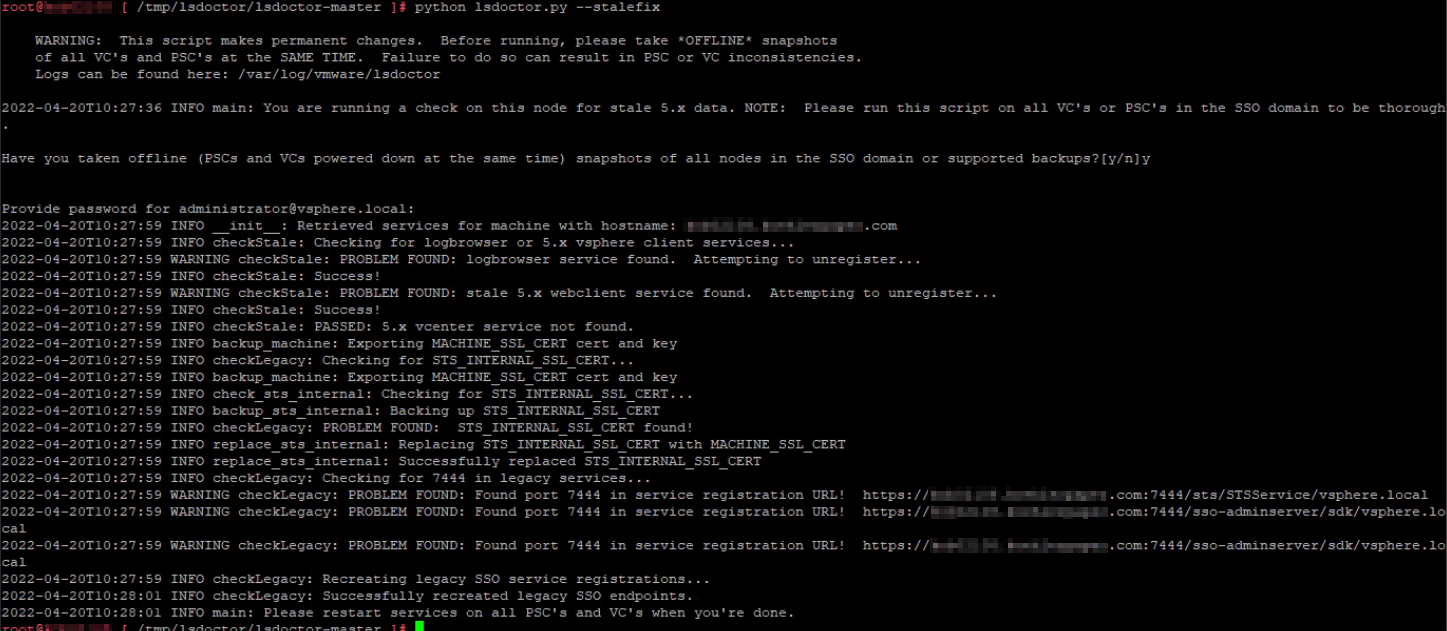

Now we follow the instructions in the output and use the stalefix option. This option cleans up any stale configurations left over from a system upgraded from 5.x. Run the following command:

python lsdoctor.py -s

Verify that you have taken the appropriate snapshots and provide the password for your SSO administrator account.

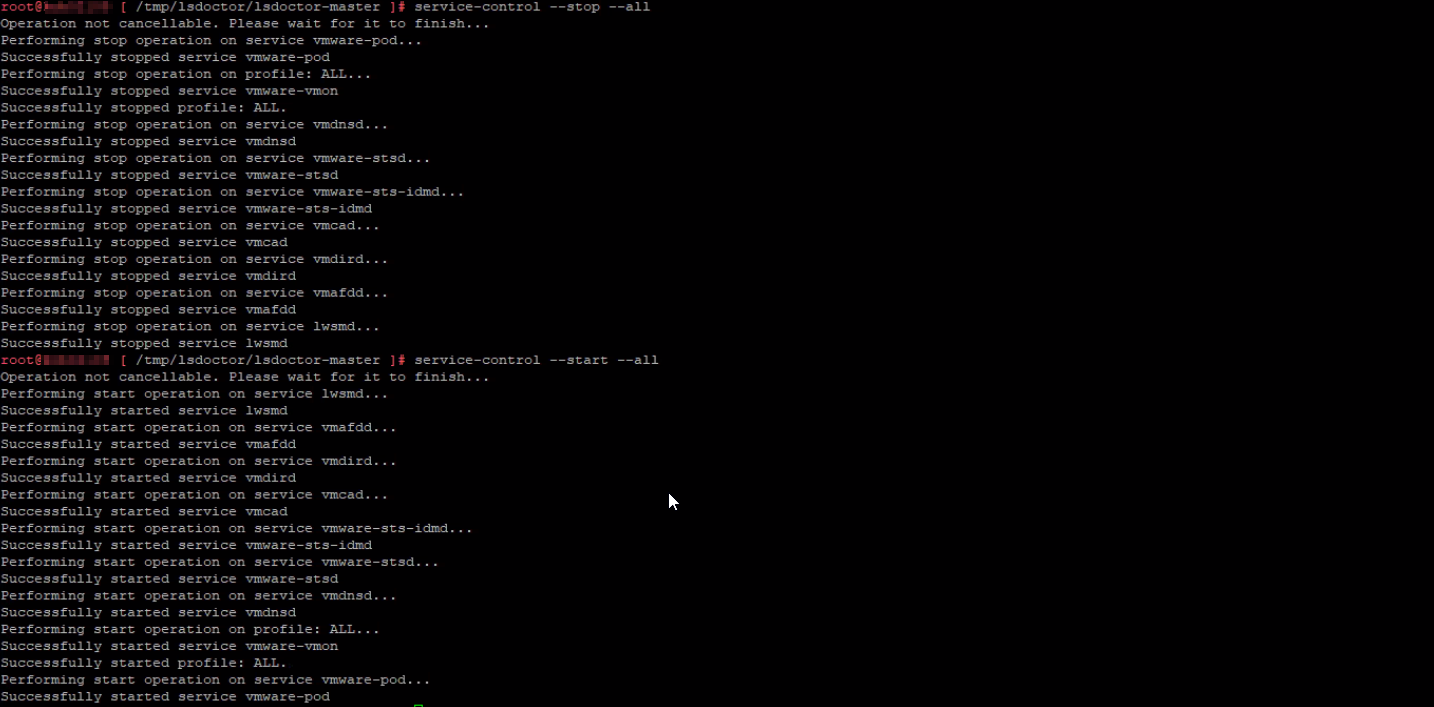

Once the script completes, restart all services:

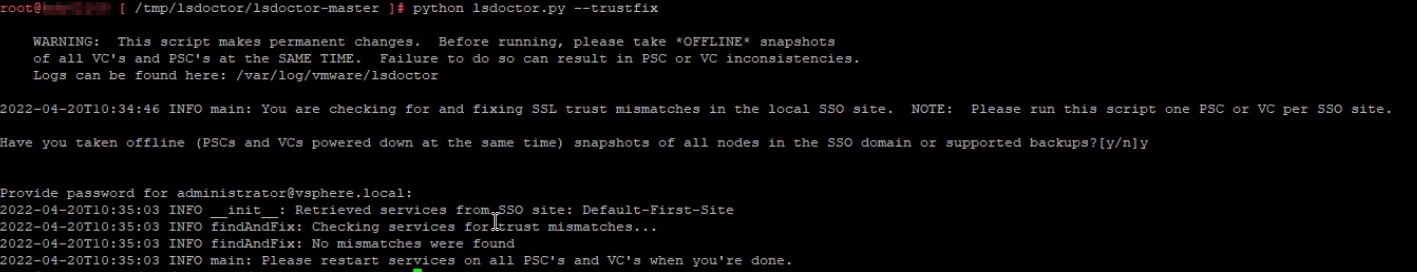
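The restart itself appears only in the screenshot. As a rough sketch, assuming the standard `service-control` utility on the appliance, stopping and then starting all services could be wrapped like this in Python 3. The function name `restart_all_services` is made up for illustration, and it defaults to a dry run that only prints the commands.

```python
import subprocess


def restart_all_services(dry_run=True):
    """Stop and then start all vCenter services; print the commands when dry_run is True."""
    commands = [
        ["service-control", "--stop", "--all"],
        ["service-control", "--start", "--all"],
    ]
    for cmd in commands:
        if dry_run:
            # Show what would be executed without touching the appliance.
            print(" ".join(cmd))
        else:
            # Raises CalledProcessError if a command exits with a non-zero status.
            subprocess.check_call(cmd)
    return commands
```

Running the two commands directly in the appliance shell does the same thing; the wrapper is only useful if you want the stop to fail loudly before the start is attempted.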

Next, we used the trustfix option (python lsdoctor.py -t). This option corrects SSL trust mismatch issues in the lookup service. The lookup service registrations may have an SSL trust value that doesn’t match the MACHINE_SSL_CERT on port 443 of the node. This can be caused by a failure during certificate replacement, among other failures.
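To illustrate what a trust mismatch means, here is a minimal Python 3 sketch that compares two certificates by fingerprint. It is not how lsdoctor works internally. It assumes you already have the certificate from a service registration as PEM text (how to export it is not covered here), and the helper names `fingerprint`, `fetch_server_cert` and `trust_matches` are made up for this example.

```python
import hashlib
import ssl


def fingerprint(pem_cert):
    """Return the SHA-256 fingerprint of a PEM certificate as lowercase hex."""
    der = ssl.PEM_cert_to_DER_cert(pem_cert)
    return hashlib.sha256(der).hexdigest()


def fetch_server_cert(host, port=443):
    """Fetch the certificate a node presents on host:port, without validating it."""
    return ssl.get_server_certificate((host, port))


def trust_matches(registered_pem, presented_pem):
    """True if the registered certificate is the one the node actually presents."""
    return fingerprint(registered_pem) == fingerprint(presented_pem)
```

With a registered certificate in `registered_pem`, `trust_matches(registered_pem, fetch_server_cert("vcsa.domain.local"))` returning `False` would correspond to the kind of mismatch the trustfix option is meant to correct.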

As before, verify that you have taken the appropriate snapshots and provide the password for your SSO administrator account. After the script finishes, restart the services again, the same way as after the stalefix option.

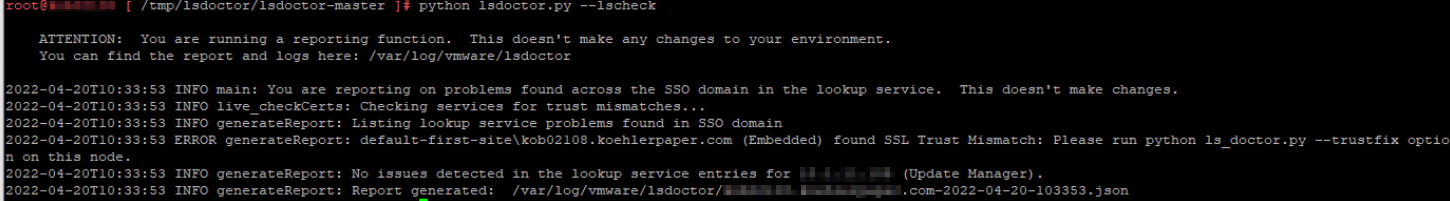

After almost all errors were resolved, we still had one error that wasn’t resolved with the lsdoctor tool:

Although we had replaced the SSL certificate, the check still recommended that we resolve it with the trustfix option. However, that was a false positive, so we safely ignored this warning and continued with the upgrade.

Finally, the Pre-Upgrade check was successful, all warnings and errors were gone, and we were able to finish the upgrade to vCenter Server 7.

Hope this can be helpful.